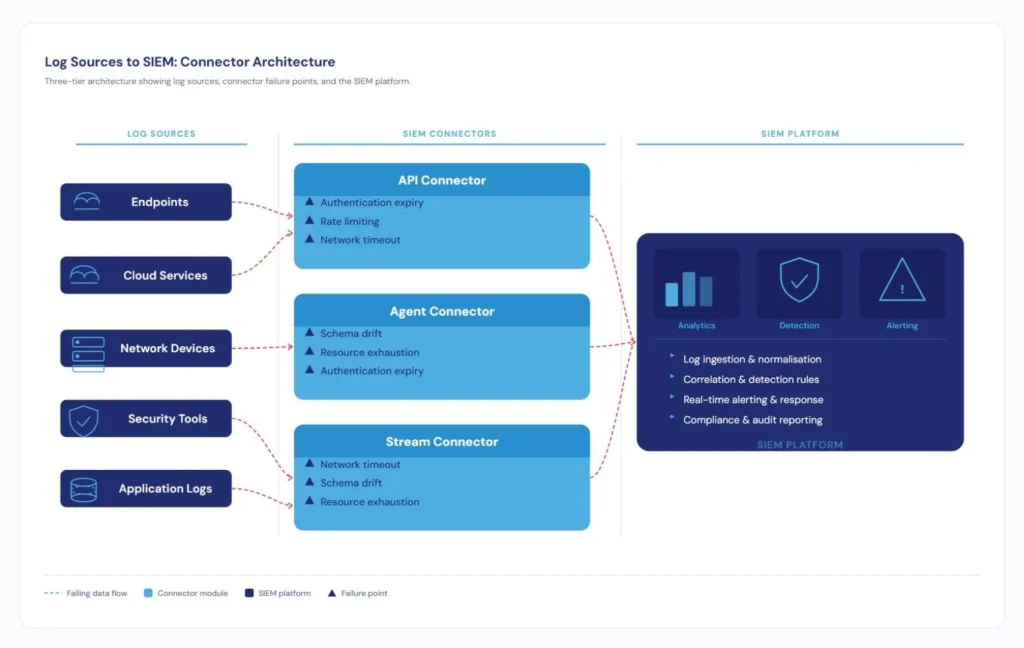

Security Information and Event Management (SIEM) systems serve as the central nervous system of modern security operations. Yet even sophisticated SIEM platforms are only as reliable as their data connectors.

The 2025 Picus Blue Report reveals a troubling reality: 50% of SIEM detection failures stem from log collection issues, another 24% from performance bottlenecks, and 13% from configuration problems.

When connectors fail silently, you’re not just missing logs. You’re flying blind in a threat landscape where the median adversary dwell time reaches 99 days. For organizations building resilient technology ecosystems, understanding connector failure modes and designing for reliability isn’t optional.

Five Critical Failure Modes

1. Authentication and Authorization Expiry

OAuth refresh tokens expire after a period of inactivity (90 days on some identity platforms). API keys rotate on security schedules. Service account permissions get revoked during audits. Each scenario breaks log ingestion, often without obvious indicators.

Microsoft’s Defender SIEM documentation specifically calls out client secret expiration as a primary failure mode, noting that organizations frequently lose initial secret values and must regenerate them through Azure portals. The detection window can span weeks.

2. Rate Limiting and Throttling

Cloud providers enforce rate limits to protect infrastructure. A connector polling AWS CloudTrail every 30 seconds works fine during normal operations but hits throttling during security incidents when log volume spikes.

Research from Uptrends shows API uptime fell from 99.66% to 99.46% between Q1 2024 and Q1 2025. Put differently, downtime rose from 0.34% to 0.54%, roughly 60% more year-over-year. For connectors making thousands of daily API calls, this degradation compounds quickly.

3. Network Latency and Timeout Misconfigurations

Connectors with hard-coded 5-second timeouts work perfectly when cloud regions respond in 200ms but fail when cross-region latency spikes to 6 seconds during peak traffic. A common trap: connectors tuned in low-latency development environments behave differently in production, where network paths traverse firewalls, proxies, and geographic boundaries.

4. Schema Drift and Field Mapping Failures

Log sources evolve constantly. Vendors add fields, deprecate others, and change data types without coordinating with SIEM deployments. CardinalOps’ 2025 State of SIEM report analyzed over 13,000 detection rules and found 12% broken due to misconfigured data sources and missing field elements. Your connector still ingests data, your SIEM still stores it, but your detection rules can’t parse the new structure.

5. Resource Exhaustion

Connectors compete for network bandwidth, memory, CPU cycles, and connection pools. As log volume grows, resource constraints emerge subtly. Five connectors that each consume 20% of a link's bandwidth are harmless in isolation but saturate the link when they run concurrently. Some events make it through. Others time out. Your SIEM has incomplete data with no clear indicator of which events are missing.

Designing for Reliability: Five Principles

1. Credential Lifecycle Management

Build authentication that assumes credentials will expire:

- Store credentials in dedicated secrets managers (HashiCorp Vault, AWS Secrets Manager, Azure Key Vault)

- Implement automated rotation with overlapping validity periods

- Monitor expiration dates and alert 30, 14, and 7 days before expiry

Authentication failure should be detected proactively through monitoring, not reactively through broken integrations.
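As a minimal sketch of the expiry alerting described above, the following Python checks a credential's expiry time against the 30, 14, and 7 day thresholds. The function name `expiry_alert` and the convention of returning `0` for an already expired credential are assumptions made for illustration; in practice the expiry timestamp would come from your secrets manager's metadata.

```python
from datetime import datetime, timedelta, timezone

# Alert thresholds from the list above, in days before expiry.
ALERT_THRESHOLDS_DAYS = (30, 14, 7)


def expiry_alert(expires_at, now=None):
    """Return the tightest threshold (in days) already crossed, or None.

    Returns 0 when the credential has already expired.
    (Hypothetical helper; the return convention is an assumption.)
    """
    now = now or datetime.now(timezone.utc)
    remaining = expires_at - now
    if remaining <= timedelta(0):
        return 0
    crossed = [d for d in ALERT_THRESHOLDS_DAYS if remaining <= timedelta(days=d)]
    return min(crossed) if crossed else None
```

Running a check like this on a schedule for every connector credential turns expiry into a routine alert rather than an outage.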

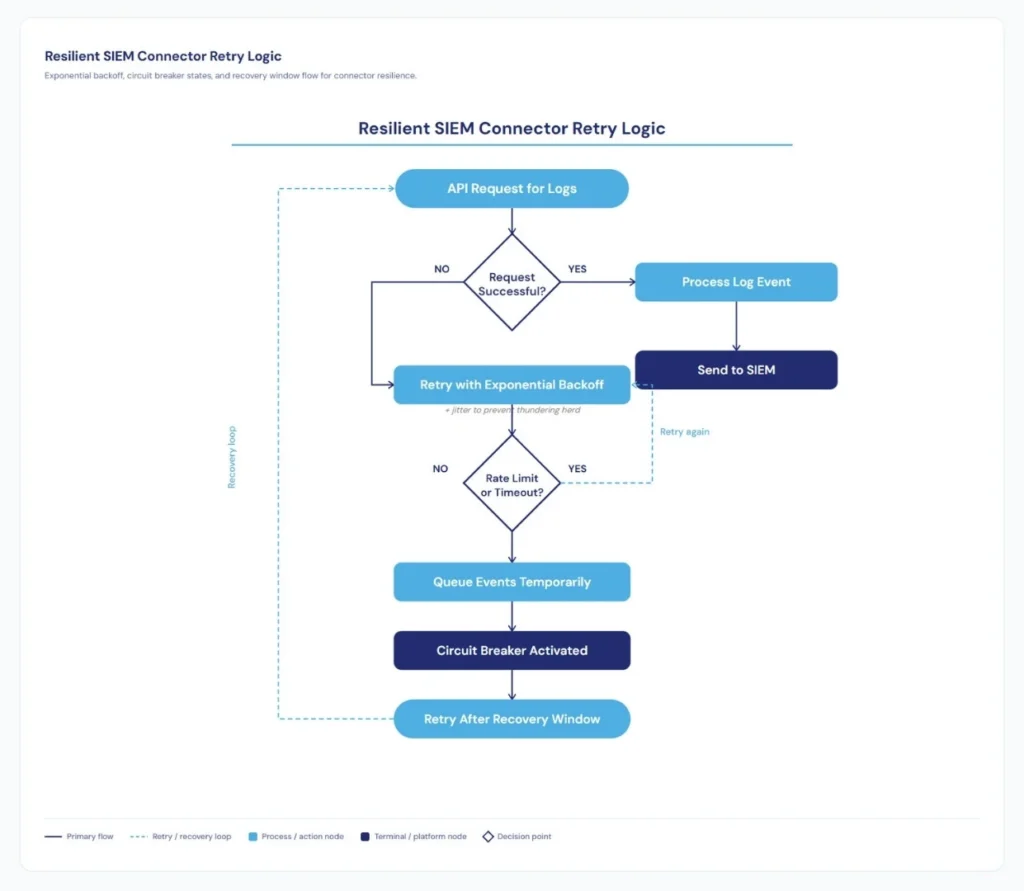

2. Adaptive Rate Limiting

Static retry logic fails in dynamic environments. Implement:

- Exponential backoff with jitter to prevent thundering herd problems

- Circuit breakers that fail fast when APIs are consistently unavailable

- Request queuing during rate limit periods with defined retention policies
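The first two items can be sketched in a few lines of Python. This is an illustrative outline, not a production client: `backoff_delay`, `CircuitBreaker`, and `call_with_retries` are hypothetical names, the defaults (1-second base, 60-second cap, five failures, 30-second cool-down) are assumptions, and the jitter variant shown is "full jitter", where the delay is drawn uniformly between zero and the exponential ceiling.

```python
import random
import time


def backoff_delay(attempt, base=1.0, cap=60.0, rng=random.random):
    """Full-jitter delay: uniform in [0, min(cap, base * 2**attempt))."""
    return rng() * min(cap, base * (2 ** attempt))


class CircuitBreaker:
    """Opens after max_failures consecutive failures; allows a probe
    request once reset_after seconds have passed."""

    def __init__(self, max_failures=5, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def allow_request(self):
        if self.opened_at is None:
            return True
        # Half-open: let a probe through after the cool-down.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


def call_with_retries(fetch, breaker, max_attempts=5, sleep=time.sleep):
    """Call fetch() with jittered backoff; fail fast if the breaker is open."""
    for attempt in range(max_attempts):
        if not breaker.allow_request():
            raise RuntimeError("circuit open: failing fast")
        try:
            result = fetch()
        except Exception:  # a real connector would catch only retryable errors
            breaker.record_failure()
            if attempt < max_attempts - 1:
                sleep(backoff_delay(attempt))
        else:
            breaker.record_success()
            return result
    raise RuntimeError("retries exhausted")
```

The jitter spreads retries from many connector instances across the backoff window, so they do not all hit the API again at the same moment.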

Design for graceful degradation. When you must drop events, use priority schemes: security-critical events from privileged accounts deserve higher priority than routine authentication successes.
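One way to express such a priority scheme is a bounded buffer that evicts the lowest-priority event when it fills up. The sketch below is an assumption-laden illustration: `PriorityEventBuffer` is a hypothetical class, and the numeric priorities are whatever scale your pipeline assigns (higher means more important).

```python
import heapq
import itertools


class PriorityEventBuffer:
    """Bounded buffer that sheds the lowest-priority event when full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._heap = []                 # min-heap of (priority, seq, event)
        self._seq = itertools.count()   # tie-breaker; keeps events uncompared
        self.dropped = 0                # emit this as a metric

    def push(self, event, priority):
        entry = (priority, next(self._seq), event)
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, entry)
            return
        # Buffer is full, so exactly one event is lost either way.
        self.dropped += 1
        # Evict the current lowest-priority event only if the new one outranks it.
        if priority > self._heap[0][0]:
            heapq.heapreplace(self._heap, entry)

    def drain(self):
        """Return events highest priority first, oldest first within a priority."""
        items = sorted(self._heap, key=lambda e: (-e[0], e[1]))
        self._heap = []
        return [event for _, _, event in items]
```

Counting every drop in `dropped` matters as much as the eviction rule: it gives you a record of how much data was shed during the throttling window.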

3. Resilient Network Communication

Network reliability requires more than timeout configuration:

- Use connection pooling with health checks to detect stale connections

- Configure timeouts at multiple levels: connection, request, and total operation

- Monitor P50, P95, and P99 latency to detect degradation before timeout failures

A connector with 50ms median latency but 5-second P99 latency is failing for a meaningful share of requests (roughly one in a hundred would hit a 5-second timeout), even if aggregate metrics look healthy.
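To make the gap between typical and tail latency concrete, here is a small sketch that computes nearest-rank percentiles over a made-up sample. The helper names and the sample values are assumptions chosen for illustration, not measurements from any real connector.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(samples_ms):
    """Summarize latencies (milliseconds) with mean and tail percentiles."""
    return {
        "mean": sum(samples_ms) / len(samples_ms),
        "p50": percentile(samples_ms, 50),
        "p95": percentile(samples_ms, 95),
        "p99": percentile(samples_ms, 99),
    }


# Hypothetical window: 98 fast requests and 2 that stall for 5 seconds.
samples = [50] * 98 + [5000] * 2
summary = latency_summary(samples)
# summary == {"mean": 149.0, "p50": 50, "p95": 50, "p99": 5000}
```

In this sample the P50 and P95 both look healthy, and only the P99 exposes the stalled requests, which is why alerting on a single aggregate can hide timeout failures.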

4. Schema Validation and Versioning

Protect against schema drift:

- Validate incoming log structures against expected schemas

- Design parsers to gracefully handle missing fields

- Implement automated testing against sample data to detect breaking changes early

Accept that Windows Event Logs will add fields and cloud providers will introduce new service types. Your normalization logic needs to adapt without manual intervention.
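A minimal sketch of this idea, assuming events arrive as Python dictionaries: one function reports drift against an expected schema, and another normalizes tolerantly so that missing or extra fields never crash the parser. The field names in `EXPECTED_SCHEMA` are hypothetical.

```python
# Hypothetical expected schema: field name -> expected Python type.
EXPECTED_SCHEMA = {
    "timestamp": str,
    "event_id": int,
    "user": str,
}


def validate_event(event, schema=EXPECTED_SCHEMA):
    """Return a sorted list of drift findings; empty means the event matches."""
    findings = []
    for field, expected_type in schema.items():
        if field not in event:
            findings.append(f"missing:{field}")
        elif not isinstance(event[field], expected_type):
            findings.append(f"type:{field}")
    for field in event.keys() - schema.keys():
        findings.append(f"unexpected:{field}")
    return sorted(findings)


def normalize(event, schema=EXPECTED_SCHEMA):
    """Keep known fields, default missing ones to None, park extras under 'extra'."""
    out = {field: event.get(field) for field in schema}
    out["extra"] = {k: v for k, v in event.items() if k not in schema}
    return out
```

Feeding the findings into a metric (for example, a counter per finding type per source) lets drift surface as a trend before detection rules silently stop matching.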

5. Comprehensive Observability

You cannot fix failures you don’t know about. Build observability from day one:

- Emit detailed metrics: successful requests, failures, retry counts, latency distributions, bytes ingested, events dropped

- Implement heartbeat mechanisms verifying end-to-end pipeline health

- Alert on silent failures: when expected event volume doesn’t arrive within defined windows

If connector monitoring relies solely on self-reported status, you’ll miss failures where the connector thinks it’s working but events aren’t reaching your SIEM.
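The silent-failure and heartbeat checks can be sketched as two small functions that run outside the connector and query the SIEM itself. Everything here is an assumption for illustration: the function names, the 50% volume threshold, and the 300-second heartbeat age limit would all need tuning against your own baselines.

```python
def check_volume(observed, baseline, min_ratio=0.5):
    """Classify one time window by comparing observed event count to a baseline.

    observed: events that actually arrived in the SIEM during the window.
    baseline: expected count for the same window (e.g. a historical average).
    """
    if baseline <= 0:
        return "no_baseline"
    if observed == 0:
        return "silent"
    if observed < baseline * min_ratio:
        return "degraded"
    return "ok"


def heartbeat_stale(last_seen_in_siem, now, max_age_seconds=300):
    """True if the newest synthetic heartbeat found in the SIEM is too old.

    Both arguments are Unix timestamps in seconds.
    """
    return (now - last_seen_in_siem) > max_age_seconds
```

Because both checks measure what reached the SIEM rather than what the connector reports, they catch the case where the connector believes it is healthy while events are being lost downstream.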

Production Deployment

Organizations operating reliable SIEM connectors deploy them as containerized services with resource limits and automated recovery. They maintain connector configuration as code in version control, implement staged rollouts for updates, and establish service level objectives for uptime and data freshness.

Most critically, they build runbooks for common failure scenarios. When authentication expires at 2 AM, responders need clear recovery procedures, not architectural discussions.

The Bottom Line

SIEM connector reliability isn’t a theoretical concern. The approaches above are battle-tested by organizations operating production deployments at scale. They work because they acknowledge a fundamental truth: failures are inevitable, so design for graceful degradation rather than perfect uptime.

The same principles that make microservices resilient apply to security data pipelines: loose coupling, defensive programming, comprehensive monitoring, and automated recovery.

The question isn’t whether your connectors will fail. They will. The question is whether you’ll detect those failures before attackers exploit the visibility gaps they create. That answer depends on the architectural decisions you make today.